转http://blog.csdn.net/blade2001/article/details/9065985

先确定pid:

top

找到最消耗cpu的进程15495

再确定tid:

ps -mp 15495 -o THREAD,tid,time

找到最占用cpu的进程18448

printf "%x\n" 18448

4810

打印堆栈

jstack 15495 | grep 4810 -A 30

例如发现栈如下:

"regionserver60020-smallCompactions-1438827962552" daemon prio=10 tid=0x00007f4ce1903800 nid=0xe2a72 runnable [0x00007f443b8f6000]

java.lang.Thread.State: RUNNABLE

at com.hadoop.compression.lzo.LzoDecompressor.decompressBytesDirect(Native Method)

at com.hadoop.compression.lzo.LzoDecompressor.decompress(LzoDecompressor.java:315)

- locked <0x00007f450c42d820> (a com.hadoop.compression.lzo.LzoDecompressor)

at org.apache.hadoop.io.compress.BlockDecompressorStream.decompress(BlockDecompressorStream.java:88)

at org.apache.hadoop.io.compress.DecompressorStream.read(DecompressorStream.java:85)

at java.io.BufferedInputStream.read1(BufferedInputStream.java:273)

at java.io.BufferedInputStream.read(BufferedInputStream.java:334)

- locked <0x00007f4494423b70> (a java.io.BufferedInputStream)

at org.apache.hadoop.io.IOUtils.readFully(IOUtils.java:192)

at org.apache.hadoop.hbase.io.compress.Compression.decompress(Compression.java:439)

at org.apache.hadoop.hbase.io.encoding.HFileBlockDefaultDecodingContext.prepareDecoding(HFileBlockDefaultDecodingContext.java:91)

at org.apache.hadoop.hbase.io.hfile.HFileBlock$FSReaderV2.readBlockDataInternal(HFileBlock.java:1522)

at org.apache.hadoop.hbase.io.hfile.HFileBlock$FSReaderV2.readBlockData(HFileBlock.java:1314)

at org.apache.hadoop.hbase.io.hfile.HFileReaderV2.readBlock(HFileReaderV2.java:358)

at org.apache.hadoop.hbase.io.hfile.HFileReaderV2$AbstractScannerV2.readNextDataBlock(HFileReaderV2.java:610)

at org.apache.hadoop.hbase.io.hfile.HFileReaderV2$ScannerV2.next(HFileReaderV2.java:724)

at org.apache.hadoop.hbase.regionserver.StoreFileScanner.next(StoreFileScanner.java:136)

at org.apache.hadoop.hbase.regionserver.KeyValueHeap.next(KeyValueHeap.java:108)

at org.apache.hadoop.hbase.regionserver.StoreScanner.next(StoreScanner.java:507)

at org.apache.hadoop.hbase.regionserver.compactions.Compactor.performCompaction(Compactor.java:217)

at org.apache.hadoop.hbase.regionserver.compactions.DefaultCompactor.compact(DefaultCompactor.java:76)

at org.apache.hadoop.hbase.regionserver.DefaultStoreEngine$DefaultCompactionContext.compact(DefaultStoreEngine.java:109)

at org.apache.hadoop.hbase.regionserver.HStore.compact(HStore.java:1106)

at org.apache.hadoop.hbase.regionserver.HRegion.compact(HRegion.java:1482)

at org.apache.hadoop.hbase.regionserver.CompactSplitThread$CompactionRunner.run(CompactSplitThread.java:475)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1145)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:615)

at java.lang.Thread.run(Thread.java:745)

是读写hfile发生的错误,导致启动多个runnable

一个应用占用CPU很高,除了确实是计算密集型应用之外,通常原因都是出现了死循环。

(友情提示:本博文章欢迎转载,但请注明出处:hankchen,http://www.blogjava.net/hankchen)

以我们最近出现的一个实际故障为例,介绍怎么定位和解决这类问题。

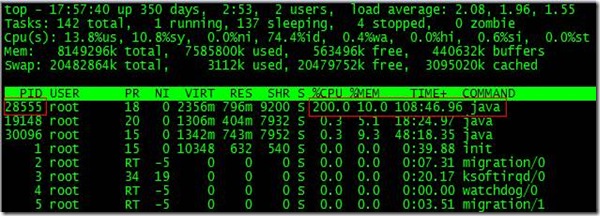

根据top命令,发现PID为28555的Java进程占用CPU高达200%,出现故障。

通过ps aux | grep PID命令,可以进一步确定是tomcat进程出现了问题。但是,怎么定位到具体线程或者代码呢?

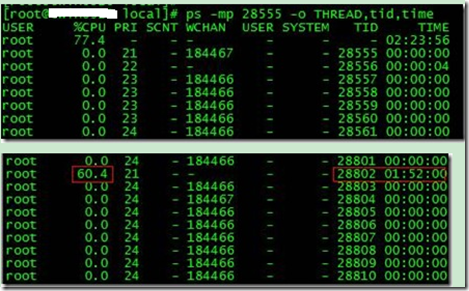

首先显示线程列表:

ps -mp pid -o THREAD,tid,time

找到了耗时最高的线程28802,占用CPU时间快两个小时了!

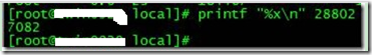

其次将需要的线程ID转换为16进制格式:

printf "%x\n" tid

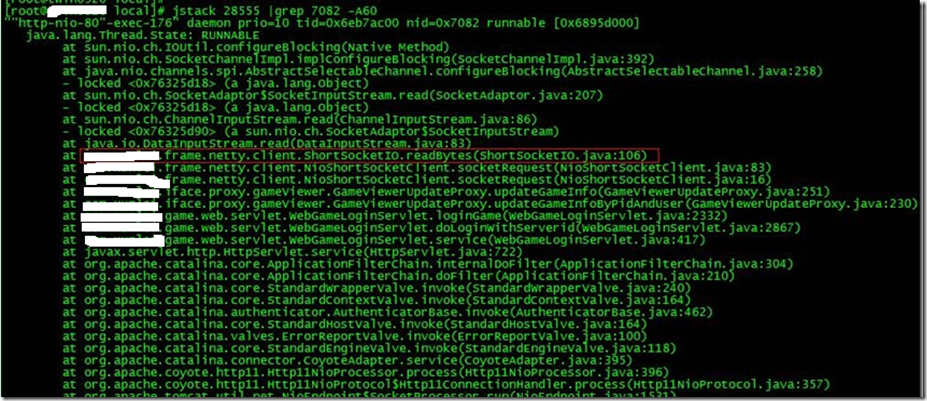

最后打印线程的堆栈信息:

jstack pid |grep tid -A 30

找到出现问题的代码了!

现在来分析下具体的代码:ShortSocketIO.readBytes(ShortSocketIO.java:106)

ShortSocketIO是应用封装的一个用短连接Socket通信的工具类。readBytes函数的代码如下:

public byte[] readBytes(int length) throws IOException {

if ((this.socket == null) || (!this.socket.isConnected())) {

throw new IOException("++++ attempting to read from closed socket");

}

byte[] result = null;

ByteArrayOutputStream bos = new ByteArrayOutputStream();

if (this.recIndex >= length) {

bos.write(this.recBuf, 0, length);

byte[] newBuf = new byte[this.recBufSize];

if (this.recIndex > length) {

System.arraycopy(this.recBuf, length, newBuf, 0, this.recIndex - length);

}

this.recBuf = newBuf;

this.recIndex -= length;

} else {

int totalread = length;

if (this.recIndex > 0) {

totalread -= this.recIndex;

bos.write(this.recBuf, 0, this.recIndex);

this.recBuf = new byte[this.recBufSize];

this.recIndex = 0;

}

int readCount = 0;

while (totalread > 0) {

if ((readCount = this.in.read(this.recBuf)) > 0) {

if (totalread > readCount) {

bos.write(this.recBuf, 0, readCount);

this.recBuf = new byte[this.recBufSize];

this.recIndex = 0;

} else {

bos.write(this.recBuf, 0, totalread);

byte[] newBuf = new byte[this.recBufSize];

System.arraycopy(this.recBuf, totalread, newBuf, 0, readCount - totalread);

this.recBuf = newBuf;

this.recIndex = (readCount - totalread);

}

totalread -= readCount;

}

}

}

问题就出在标红的代码部分。如果this.in.read()返回的数据小于等于0时,循环就一直进行下去了。而这种情况在网络拥塞的时候是可能发生的。

至于具体怎么修改就看业务逻辑应该怎么对待这种特殊情况了。

最后,总结下排查CPU故障的方法和技巧有哪些:

1、top命令:Linux命令。可以查看实时的CPU使用情况。也可以查看最近一段时间的CPU使用情况。

2、PS命令:Linux命令。强大的进程状态监控命令。可以查看进程以及进程中线程的当前CPU使用情况。属于当前状态的采样数据。

3、jstack:Java提供的命令。可以查看某个进程的当前线程栈运行情况。根据这个命令的输出可以定位某个进程的所有线程的当前运行状态、运行代码,以及是否死锁等等。

4、pstack:Linux命令。可以查看某个进程的当前线程栈运行情况。

(友情提示:本博文章欢迎转载,但请注明出处:hankchen,http://www.blogjava.net/hankchen)

相关推荐

NULL 博文链接:https://ainn2006.iteye.com/blog/1549724

线上故障排查方法记录

Java线上故障排查方案(2).pdf

Java线上故障排查方案 结合实战,超详细Java线上故障排查方案总结,值得收藏

JAVA 线上故障排查完整套路,从 CPU、磁盘、内存、网络、GC 一条龙!.docx

JAVA线上问题排查和工具 内容详细 结合实际工作 贴合实际

主要介绍了JAVA线上常见问题排查手段(小结),小编觉得挺不错的,现在分享给大家,也给大家做个参考。一起跟随小编过来看看吧

java进程高CPU占用故障排查

Java线程CPU占用高原因排查方法,Java线程CPU占用高原因排查方法

线上故障排查全套路,总有一款适合你线上故障排查全套路,总有一款适合你来自:fredal的博客链接:https://fredal.xin/java-error-c

java cpu 内存占用高 问题 模拟并排查 https://blog.csdn.net/jiankunking/article/details/79749836 https://blog.csdn.net/jiankunking/article/details/79749483

当用户量过大,或服务器性能不足以支持大用户量,但同时又得不到扩容的情况下,进行性能分析,并对系统、应用、程序进行优化显得尤为重要,也是节省资源的一种必不可少的手段。目前大多数运维产品都基于JAVA语言开发...

CPU占用过高是LINUX服务器出现常见的一种故障,也是程序员线上排查错误必须掌握的技能,我们经常需要找出相应的应用程序并快速地定位程序中的具体代码行数,本文将介绍一种CPU占用过高的一种处理思路,文中采用四...

Linux CPU占用率高故障排查.docx

主要介绍了JAVA线上常见问题排查手段汇总,文中通过示例代码介绍的非常详细,对大家的学习或者工作具有一定的参考学习价值,需要的朋友可以参考下

一款线上监控诊断产品,通过全局视角实时查看应用 load、内存、gc、线程的状态信息,并能在不修改应用代码的情况下,对业务问题进行诊断,包括查看方法调用的出入参、异常,监测方法执行耗时,类加载信息等,大大...

大厂高手骆俊武出品的《漫谈线上问题排查》电子书

APM应用监控故障排查.pdf DAM软件分发故障排查.pdf EAD可控软件管理故障排查.pdf EAD补丁管理故障排查.pdf EAD防病毒软件故障排查.pdf iMC BIMS组件问题分析.pdf iMC双机冷备方案故障排查.pdf WSM资源管理...

802.1x与EAD故障排查 AM接口故障排查 ARP-DETECTION故障排查 BGP MPLS故障排查 BGP故障排查 BPDU Tunnel故障排查 DHCP Server故障排查 DVPN HUB-HUB DVPN HUB-SPOKE 2 DVPN HUB-SPOKE DVPN DVPN故障排查 E1POS...